Geometry Operators in Joins vs. WHERE clause

I've noticed that most people when they do queries in PostGIS (I presume other spatial databases as well), seem

to put all there geometry relation (intersects, contains etc.) checks in the WHERE clause instead of the FROM clause of their

SQL statements whereas I tend to do the opposite.

I've always wondered if there is a speed advantage of doing it one way or the other so I decided to look at the 2 EXPLAIN ANALYZE plans

in pgAdmin for these two sample queries.

SELECT t.town, count(s.gid)as totschools

FROM towns t

INNER JOIN

schools s ON st_intersects(t.the_geom, s.the_geom)

GROUP BY t.town

SELECT t.town, count(s.gid) as totschools

FROM towns t, schools s

WHERE st_intersects(t.the_geom, s.the_geom)

GROUP BY t.town

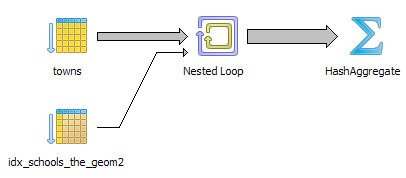

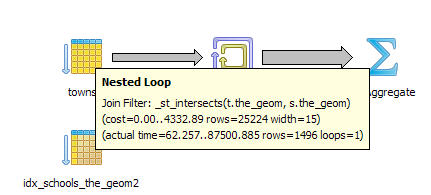

As I expected, the plans are identical and look like this.

I ran for a couple of other types of queries and got the same conclusions. Even for compound statements like the below, the explain analyze plans were identical and the timings if I run for enough iterations come out on average the same.

--Total runtime: 90699.236 ms

SELECT t.town, count(s.gid) as totschools

FROM towns t INNER JOIN schools s ON (st_intersects(t.the_geom, s.the_geom) AND s.grades LIKE '%K%')

GROUP BY t.town

/**HashAggregate (cost=4459.01..4463.40 rows=351 width=15) (actual time=90698.767..90698.995 rows=328 loops=1)

-> Nested Loop (cost=0.00..4332.89 rows=25224 width=15) (actual time=63.797..90681.218 rows=1496 loops=1)

Join Filter: _st_intersects(t.the_geom, s.the_geom)

-> Seq Scan on towns t (cost=0.00..131.41 rows=1241 width=8242) (actual time=0.010..1.966 rows=1241 loops=1)

-> Index Scan using idx_schools_the_geom2 on schools s (cost=0.00..3.37 rows=1 width=29) (actual time=25.623..66.418 rows=2 loops=1241)

Index Cond: (t.the_geom && s.the_geom)

Filter: (((s.grades)::text ~~ '%K%'::text) AND (t.the_geom && s.the_geom))

Total runtime: 90699.236 ms **/

--Total runtime: 92633.716 ms

SELECT t.town, count(s.gid) as totschools

FROM towns t , schools s

WHERE (st_intersects(t.the_geom, s.the_geom) AND s.grades LIKE '%K%')

GROUP BY t.town

/*

HashAggregate (cost=524.90..529.29 rows=351 width=16)

-> Nested Loop (cost=0.00..517.76 rows=1429 width=16)

Join Filter: _st_intersects(t.the_geom, s.the_geom)

-> Seq Scan on towns t (cost=0.00..25.51 rows=351 width=25602)

-> Index Scan using idx_schools_the_geom2 on schools s (cost=0.00..1.39 rows=1 width=29)

Index Cond: (t.the_geom && s.the_geom)

Filter: (((s.grades)::text ~~ '%K%'::text) AND (t.the_geom && s.the_geom))

*/

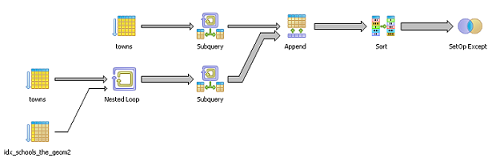

Visual plan for both

So the question is why is there a preference for putting these things in the WHERE and why do I prefer JOIN?

If you think about it, JOIN is kind of a weird concept that appeals mostly to database geeks - whereas WHERE is something most people deal with every day.

WHERE is more intuitive; Also let us not forget the nonconformist empire of Oracle and how in the olden days of Oracle, JOINS were

done in the WHERE clause with things like =+ = += etc., to do LEFT INNER RIGHT FULL JOINS and I suspect a lot of Oracle Database users still do that even though it violates the ANSI SQL Standard.

Now why do I prefer JOIN over WHERE for this kind of thing

- An INNER JOIN can be flipped over to a LEFT or RIGHT JOIN at the drop of a hat (give me all in set A even if there is no matching record in set B etc.) . There is no equivalent concept for WHERE except in Oracle. In the case above - yes should a town not have schools I probably want to know that and would change to a LEFT JOIN instead of having it left out of my result as would happen if I had the clause in the WHERE or INNER JOIN.

- If I have at least one JOIN condition for each table, I usually don't make the mistake of doing an accidental cartesian product by forgetting a WHERE condition. This is of course just me. Others may not have this problem.

Why ever ask WHERE?

Now while I do tend to make sure that I have at least one JOIN condition for each of my tables, I also use WHERE? If you can do LEFT RIGHT INNER

with JOINs and WHERE can only simulate INNER JOINS, why is WHERE ever important?

Well I can think of several cases where I would use WHERE in conjunction with

JOIN, some cases where I arbitrarily choose JOIN and no WHERE out of habit, and some where I just have a WHERE such as when I have a VIEW so I put the JOIN in the view and a where in my query against the view or I have a single table or my audience doesn't understand the concept of JOIN so its easier to just use WHERE. Below is an example where WHERE is a useful thing and can't be replaced with just a JOIN.

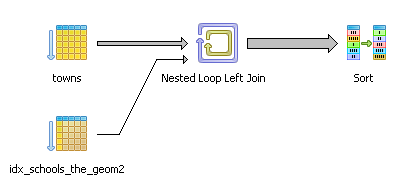

EXCEPTION Queries: Give me a list of all towns that have no kindergartens

--Total runtime: 90916.939 ms

SELECT t.town

FROM towns t

LEFT JOIN

schools s ON

(st_intersects(t.the_geom, s.the_geom)

AND s.grades LIKE '%K%')

WHERE s.gid IS NULL

ORDER BY t.town

Sort (cost=517.77..517.77 rows=1 width=12) (actual time=17839.682..17839.687 rows=23 loops=1)

Sort Key: t.town

-> Nested Loop Left Join (cost=0.00..517.76 rows=1 width=12) (actual time=37.866..17839.617 rows=23 loops=1)

Join Filter: _st_intersects(t.the_geom, s.the_geom)

Filter: (s.gid IS NULL)

-> Seq Scan on towns t (cost=0.00..25.51 rows=351 width=25602) (actual time=0.006..0.303 rows=351 loops=1)

-> Index Scan using idx_schools_the_geom2 on schools s (cost=0.00..1.39 rows=1 width=29) (actual time=6.792..29.123 rows=7 loops=351)

Index Cond: (t.the_geom && s.the_geom)

Filter: (((s.grades)::text ~~ '%K%'::text) AND (t.the_geom && s.the_geom))

Total runtime: 17839.764 ms

It is really quite hard to answer the above question without a WHERE and using a NOT IN(subselect) or EXCEPT clause to avoid a JOIN is in general slower in DBMSs I have tried. Not in all cases though.

As shown later below my NOT IN for this particular case beats out the LEFT 2 out of 3 times. This could have more to do with the fact that the planner has an easier time optimizing geometric INNER JOINS than LEFT JOINS.

What the above query is basically doing is

- Match up the towns with schools with kindergartens that fall in the town region and if no match, then create a placeholder (filled with all nulls where we would otherwise have school data) so that we always have at least one school record for each town in our result.

I like to think of this as creating an imaginary kindergarten for towns that can't afford a real one so that no town is left behind. Kind of like Boston's Silver Line affectionately called The Silver Lie

In a nutshell that is what a LEFT JOIN is. It makes the impossible possible by saying create a place holder in the second data set where there is no match.

- Now give me only a list of towns that have a non-existent kindergarten.

Now some may ask -

Q: Why can't you put the s.gid IS NULL in the LEFT JOIN clause and don't ask WHERE?

A: Because the LEFT JOIN is what creates the concept of a nonexistent kindergarten school so if you ask

for such a thing before the concept of such a thing exists,

you get all towns because there exists no real school that is imaginary. It doesn't take the planner long to figure out that there is no such school and to create a virtual school placeholder for all towns.

In fact if you run such a query, the planner will return all the towns to you and very quickly in fact because it completely ignores the

costly intersect check because it needs to do very little analysis to determine that doing an expensive intersect check is more costly than taking the obvious that a primary key (s.gid can not have nulls if it actually exists or a real school is never imaginary).

Its kind of strange that in most DBMSs I have tried, doing a left is in general faster than doing a NOT IN or EXCEPT. My guess for this reason is

a LEFT takes in general less memory and sorting power to process. This varies depending on datasets and the properties of your dataset.

Think about the case of if the two sets of geometries intersect I can immediately throw out those results because looking forward I know I really don't care about towns that have kindergartens so as soon as I see such a thing - I throw it away.

So while I need to process it, I immediately throw this information away. In fact think about it - I never asked what schools it has - so as soon as I see a town with a kindergarten - I could care less about it.

I never have to consider this town again or join it or order it with my other towns.

I do not need to remember it.

If I do a NOT IN or EXCEPT I first have to ask which towns have kindergartens and then which towns are not in the set of towns that have kindergartens.

MORE MEMORY and processor needed for sorting in general because I need to know the towns that have kindergartens to compare with my full town set to know the towns that don't have kindergartens. Well its not quite that simple for NOT IN and EXCEPT, but almost because the planner sees these as separate distinct questions instead of in the

case with the LEFT WHERE where it perceives that as one question.

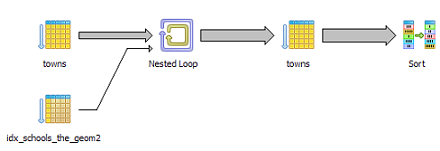

Below is the same question - asked with a NOT IN.

Note: We are asking who is in the list (our inner question) and who is not in the list that we just generated.

SELECT t.town

FROM towns t

WHERE t.gid

NOT IN(SELECT t.gid

FROM towns t, schools s

WHERE (st_intersects(t.the_geom, s.the_geom) AND s.grades LIKE '%K%'))

ORDER BY t.town

/** Sort (cost=554.28..554.72 rows=176 width=12) (actual time=17804.008..17804.014 rows=23 loops=1)

Sort Key: town

-> Seq Scan on towns t (cost=521.33..547.72 rows=176 width=12) (actual time=17803.827..17803.977 rows=23 loops=1)

Filter: (NOT (hashed subplan))

SubPlan

-> Nested Loop (cost=0.00..517.76 rows=1429 width=4) (actual time=7.226..17800.803 rows=1496 loops=1)

Join Filter: _st_intersects(t.the_geom, s.the_geom)

-> Seq Scan on towns t (cost=0.00..25.51 rows=351 width=25594) (actual time=0.001..0.290 rows=351 loops=1)

-> Index Scan using idx_schools_the_geom2 on schools s (cost=0.00..1.39 rows=1 width=25) (actual time=6.856..29.051 rows=7 loops=351)

Index Cond: (t.the_geom && s.the_geom)

Filter: (((s.grades)::text ~~ '%K%'::text) AND (t.the_geom && s.the_geom))

Total runtime: 17804.101 ms ***/

Same question with EXCEPT - return all towns except those that have kindergartens

Same question with EXCEPT - return all towns except those that have kindergartens

SELECT t.town

FROM towns t

EXCEPT

SELECT t.town

FROM towns t ,schools s

WHERE (st_intersects(t.the_geom, s.the_geom)

AND s.grades LIKE '%K%')

ORDER BY 1

/**SetOp Except (cost=657.16..666.06 rows=178 width=12) (actual time=20962.974..20964.141 rows=23 loops=1)

-> Sort (cost=657.16..661.61 rows=1780 width=12) (actual time=20962.956..20963.398 rows=1847 loops=1)

Sort Key: town

-> Append (cost=0.00..561.07 rows=1780 width=12) (actual time=0.007..20958.763 rows=1847 loops=1)

-> Subquery Scan *SELECT* 1 (cost=0.00..29.02 rows=351 width=12) (actual time=0.006..0.364 rows=351 loops=1)

-> Seq Scan on towns t (cost=0.00..25.51 rows=351 width=12) (actual time=0.005..0.178 rows=351 loops=1)

-> Subquery Scan *SELECT* 2 (cost=0.00..532.05 rows=1429 width=12) (actual time=7.062..20957.331 rows=1496 loops=1)

-> Nested Loop (cost=0.00..517.76 rows=1429 width=12) (actual time=7.061..20955.576 rows=1496 loops=1)

Join Filter: _st_intersects(t.the_geom, s.the_geom)

-> Seq Scan on towns t (cost=0.00..25.51 rows=351 width=25602) (actual time=0.001..0.293 rows=351 loops=1)

-> Index Scan using idx_schools_the_geom2 on schools s (cost=0.00..1.39 rows=1 width=25) (actual time=6.721..28.799 rows=7 loops=351)

Index Cond: (t.the_geom && s.the_geom)

Filter: (((s.grades)::text ~~ '%K%'::text) AND (t.the_geom && s.the_geom))

Total runtime: 20964.237 ms **/

One reason why it takes less memory to do a LEFT JOIN is what makes database programming a little harder to understand than standard procedural logic. Remember I stressed the idea of concepts verses reality. Sure the idea of a school that doesn't actually exist is kind of silly but its a useful model and allows us to do one

very important thing - IRRADICATE INCONVENIENT EXCEPTIONS. All my towns now have kindergartens (sort of) so I am no longer asking a question I have no data for. The fact that some of these kindergartens are imaginary and that I am now on the hunt for towns with imaginary kindergartens is inconsequential.

I have irradicated this inconvenient exception that forces me to ask, Which towns have kindergartens, a question I could care less about.

Although JOINS happen conceptually before WHERE, in reality they don't need to as long as the planner can guarantee the process of solving things out of order mimicks the conceptual model. In reality they never need to happen at all if reality can mimick concept.

For example if you had in your WHERE clause towns.town LIKE 'ZZ%' and you have an index on town, your JOIN would most likely never happen because the database planner would run the WHERE part first since its less costly and would not affect the results (violate the conceptual model).

It would then quickly realize no such Town exists and therefore the result is the empty set and doing a join will not change this result.

Its actually a wonderful hack that you can state a problem, see annoying exceptions to the rule and quickly concoct conceptual models that destroy these nasty exceptions so you can treat everything the same.